Classify Hurricanes And Typhoons Using Atlantic HURDAT2 and NE/NC Pacific HURDAT2 Dataset

- realcode4you

- Jun 21, 2021

Requirement:

Dataset Description:

The National Hurricane Center (NHC) publishes its historical tropical cyclone database in a format known as HURDAT, short for HURricane DATabase. These databases (Atlantic HURDAT2 and NE/NC Pacific HURDAT2) contain six-hourly information on the location, maximum winds, central pressure, and (starting in 2004) size of all known tropical and subtropical cyclones.

Columns:

ID

Name

Date

Time

Event

Status

Latitude

Longitude

Maximum Wind

Minimum Pressure

Low Wind NE

Low Wind SE

Low Wind SW

Low Wind NW

Moderate Wind NE

Moderate Wind SE

Moderate Wind SW

Moderate Wind NW

High Wind NE

High Wind SE

High Wind SW

High Wind NW

Problem Statement

You are provided with two datasets, “Pacific_train.csv” and “Pacific_test.csv”, containing hurricane and typhoon records.

***Note: No train-test splitting should be done as test data is already provided***

You are required to make a multi-class classification model where the target variable is “Status” to classify hurricanes and typhoons into the correct category.

Select appropriate features and build a classification model with each of the following algorithms, reporting a 10-fold cross-validation score for each:

Decision Trees (try different split criteria and choose the best)

Random Forest

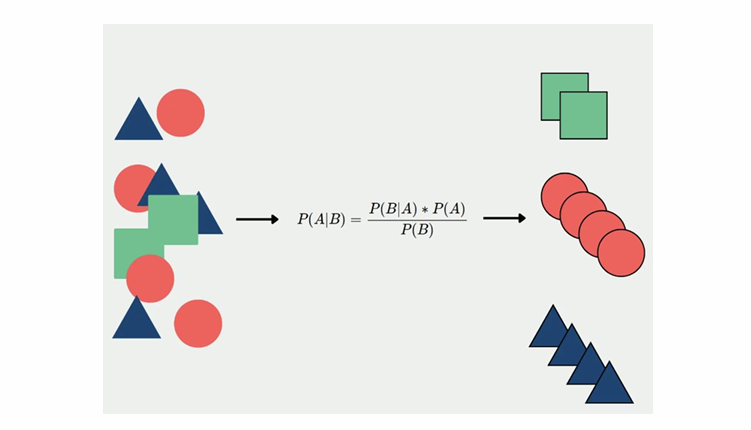

Naive Bayes

Support Vector Classifier
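As a rough illustration of the 10-fold cross-validation step, the sketch below scores each of the listed algorithms with scikit-learn's `cross_val_score`. The data is synthetic (an assumption, standing in for features read from Pacific_train.csv), and `random_state` is set only so that the run is repeatable.

```python
import numpy as np
from sklearn.ensemble import RandomForestClassifier
from sklearn.model_selection import cross_val_score
from sklearn.naive_bayes import GaussianNB
from sklearn.svm import SVC
from sklearn.tree import DecisionTreeClassifier

# Synthetic stand-in for three numeric features (assumption: the real X
# would come from the training CSV).
rng = np.random.default_rng(0)
X = rng.normal(size=(300, 3))
# Three classes (0, 1, 2) derived from the first two features.
y = (X[:, 0] > 0).astype(int) + (X[:, 1] > 0).astype(int)

models = {
    "DecisionTree (gini)": DecisionTreeClassifier(criterion="gini", random_state=0),
    "DecisionTree (entropy)": DecisionTreeClassifier(criterion="entropy", random_state=0),
    "RandomForest": RandomForestClassifier(random_state=0),
    "GaussianNB": GaussianNB(),
    "SVC": SVC(),
}

# Mean accuracy over 10 folds for each model.
cv_scores = {}
for name, model in models.items():
    scores = cross_val_score(model, X, y, cv=10)
    cv_scores[name] = scores.mean()
    print(f"{name}: {cv_scores[name]:.3f}")
```

Comparing the two decision-tree rows is one simple way to "choose the best" criterion before moving on to the other models.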

HINT: Use correlation to select the most appropriate features.

The features [Maximum Wind, Minimum Pressure, Low Wind NE] can be used for the model fitting
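The correlation hint could be applied along the following lines. This is only a sketch: the small table is made up for illustration, the intensity ordering in `status_rank` and the 0.5 cut-off are assumptions, and the real dataset contains more status codes than the three shown.

```python
import pandas as pd

# Made-up rows for illustration only (not taken from the dataset).
toy = pd.DataFrame({
    "Status": ["TD", "TD", "TS", "TS", "HU", "HU"],
    "Maximum Wind": [25, 30, 40, 55, 70, 90],
    "Minimum Pressure": [1008, 1006, 1000, 994, 980, 960],
    "Time": [0, 600, 1200, 1800, 0, 600],
})

# Assumption: map statuses to integers in order of intensity so that a
# linear correlation with the target is meaningful.
status_rank = {"TD": 0, "TS": 1, "HU": 2}
target = toy["Status"].map(status_rank)

# Absolute Pearson correlation of every numeric feature with the target.
features = toy.drop(columns="Status")
corr = features.corrwith(target).abs().sort_values(ascending=False)

# Keep features above a hypothetical threshold.
selected = corr[corr > 0.5].index.tolist()
print(corr)
print(selected)
```

On this toy table the wind and pressure columns rank highest while `Time` shows no linear relationship, which is consistent with the features suggested in the hint.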

Write Python functions for the following metrics and compare the performance of the algorithms used above:

Recall

Precision

Accuracy

Recall and precision are to be computed for each label and algorithm pair.
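A minimal sketch of such functions in plain Python is shown below. The function names and the example labels are illustrative choices, and returning 0.0 when a label is never predicted (or never present) is an assumption about how to handle division by zero.

```python
def accuracy(y_true, y_pred):
    """Fraction of predictions that match the true labels."""
    correct = sum(t == p for t, p in zip(y_true, y_pred))
    return correct / len(y_true)


def precision(y_true, y_pred, label):
    """Of all samples predicted as `label`, the fraction that truly are."""
    tp = sum(t == label and p == label for t, p in zip(y_true, y_pred))
    predicted = sum(p == label for p in y_pred)
    return tp / predicted if predicted else 0.0


def recall(y_true, y_pred, label):
    """Of all samples that truly are `label`, the fraction predicted as such."""
    tp = sum(t == label and p == label for t, p in zip(y_true, y_pred))
    actual = sum(t == label for t in y_true)
    return tp / actual if actual else 0.0


# Illustrative labels only.
y_true = ["HU", "HU", "TS", "TS", "TD", "TD"]
y_pred = ["HU", "TS", "TS", "TS", "TD", "HU"]

print("accuracy:", accuracy(y_true, y_pred))
for label in sorted(set(y_true)):
    print(label, precision(y_true, y_pred, label), recall(y_true, y_pred, label))
```

Calling `precision` and `recall` once per label for each fitted model gives the label and algorithm table the task asks for.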

1. Which is the best model?

Hint: Implement all the above-mentioned models and then calculate the value of recall, precision and accuracy of each of them to finally select the best model.

NOTE: You MUST implement all the 4 models mentioned above in the question.

NOTE: You must use the training dataset to train the model and the testing dataset to check the accuracy and confusion matrix.

NOTE: Do not set any hyperparameters; use each model with its default settings.
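The notes above describe a train-then-test workflow, which might look roughly like this. The data is synthetic and the `make_split` helper is hypothetical; in the real task the two sets would come from the provided CSV files. Models are left at their defaults apart from `random_state`, which is set only for repeatability.

```python
import numpy as np
from sklearn.ensemble import RandomForestClassifier
from sklearn.metrics import accuracy_score, confusion_matrix
from sklearn.naive_bayes import GaussianNB
from sklearn.svm import SVC
from sklearn.tree import DecisionTreeClassifier

rng = np.random.default_rng(1)


def make_split(n):
    """Hypothetical helper: synthetic features and a binary label."""
    X = rng.normal(size=(n, 3))
    y = (X[:, 0] + X[:, 1] > 0).astype(int)
    return X, y


X_train, y_train = make_split(200)
X_test, y_test = make_split(80)

models = [
    DecisionTreeClassifier(random_state=0),
    RandomForestClassifier(random_state=0),
    GaussianNB(),
    SVC(),
]

# Fit on the training set, evaluate on the separate test set.
results = {}
for model in models:
    model.fit(X_train, y_train)
    y_pred = model.predict(X_test)
    name = type(model).__name__  # e.g. "GaussianNB"
    results[name] = accuracy_score(y_test, y_pred)
    print(name, round(results[name], 3))
    print(confusion_matrix(y_test, y_pred))

# The best model is the one with the highest test accuracy.
best_name = max(results, key=results.get)
print("best:", best_name, results[best_name])
```

Using `type(model).__name__` yields the sklearn class name directly, which is the kind of name the output note asks for.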

Training Data: res/training/Pacific_train.csv

Test Data: res/validation/Pacific_test.csv

Final Output Sample:

NOTE: Suppose the Naive Bayes algorithm is the best algorithm for the above scenario with accuracy 0.7; then write GaussianNB (the name of the Naive Bayes class in sklearn) and its accuracy score to a CSV file in the above-mentioned format.

Output Format:

Perform the above operations and write your output to a file named output.csv, which should be present at the location output/output.csv

output.csv should contain the answer to each question on consecutive rows.
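Writing the result file could be sketched as follows. The exact row layout is not shown in the task text, so placing the model name and the accuracy on two consecutive rows is an assumption, and `write_result` is a hypothetical helper name. The demo writes into a temporary directory to avoid touching the real output folder.

```python
import csv
import tempfile
from pathlib import Path


def write_result(model_name, accuracy, path="output/output.csv"):
    """Write the model name and its accuracy on consecutive rows (assumed layout)."""
    path = Path(path)
    path.parent.mkdir(parents=True, exist_ok=True)  # create output/ if missing
    with path.open("w", newline="") as f:
        writer = csv.writer(f)
        writer.writerow([model_name])
        writer.writerow([accuracy])
    return path


# Demo in a temporary directory; the real call would use the default path.
with tempfile.TemporaryDirectory() as tmp:
    out = write_result("GaussianNB", 0.7, Path(tmp) / "output" / "output.csv")
    rows = out.read_text().splitlines()

print(rows)
```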

***Note: Write the code only inside the solution() function and do not pass any additional arguments. For the predefined stub, refer to stub.py***

Note: This question will be evaluated based on the number of test cases that your code passes.

Solution:

Import Libraries

import pandas as pd

import matplotlib.pyplot as plt

from sklearn import tree

from sklearn.naive_bayes import GaussianNB

from sklearn.ensemble import RandomForestClassifier

from sklearn import svm

from sklearn import preprocessing

from sklearn.model_selection import cross_val_score

from sklearn.metrics import classification_report

Read Data

df=pd.read_csv('Pacific Training dataset.csv')

df.dropna(inplace=True)

df.head()

df_test=pd.read_csv('Pacific Test dataset.csv')

df_test.dropna(inplace=True)

df_test.head()
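One caveat about `dropna`: it removes only true NaN values. HURDAT2 records mark missing measurements with sentinel numbers (-999 for pressure and wind radii, -99 for maximum wind), so if the CSV files keep those placeholders (an assumption worth checking on the real data), they would survive `dropna`. A small sketch with made-up rows:

```python
import numpy as np
import pandas as pd

# Made-up rows for illustration; -999 and -99 stand for "missing".
toy = pd.DataFrame({
    "Maximum Wind": [35, 50, -99],
    "Minimum Pressure": [-999, 990, 1002],
    "Low Wind NE": [-999, 60, 30],
})

# dropna alone keeps every row because there are no NaN values yet.
print(len(toy.dropna()))

# Convert the sentinels to NaN first, then drop incomplete rows.
# Assumption: no column legitimately contains -999 or -99.
cleaned = toy.replace([-999, -99], np.nan).dropna()
print(cleaned)
```

Whether to drop such rows or keep them is a modelling decision; dropping every row with a missing wind radius may discard most records from before 2004, when size information was not yet recorded.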

df.info()

Output:

<class 'pandas.core.frame.DataFrame'>
Int64Index: 15681 entries, 0 to 15680
Data columns (total 22 columns):
 #   Column            Non-Null Count  Dtype
---  ------            --------------  -----
 0   ID                15681 non-null  object
 1   Name              15681 non-null  object
 2   Date              15681 non-null  int64
 3   Time              15681 non-null  int64
 4   Event             15681 non-null  object
 5   Status            15681 non-null  object
 6   Latitude          15681 non-null  object
 7   Longitude         15681 non-null  object
 8   Maximum Wind      15681 non-null  int64
 9   Minimum Pressure  15681 non-null  int64
 10  Low Wind NE       15681 non-null  int64
 11  Low Wind SE       15681 non-null  int64
 12  Low Wind SW       15681 non-null  int64
 13  Low Wind NW       15681 non-null  int64
 14  Moderate Wind NE  15681 non-null  int64
 15  Moderate Wind SE  15681 non-null  int64
 16  Moderate Wind SW  15681 non-null  int64
 17  Moderate Wind NW  15681 non-null  int64
 18  High Wind NE      15681 non-null  int64
 19  High Wind SE      15681 non-null  int64
 20  High Wind SW      15681 non-null  int64
 21  High Wind NW      15681 non-null  int64
dtypes: int64(16), object(6)
memory usage: 2.8+ MB
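The `df.info()` listing shows Latitude and Longitude stored as `object`, so they are excluded when only numeric columns are selected. If they were wanted as features, a conversion along these lines might work. It assumes the HURDAT2 convention of a number followed by a hemisphere letter (for example "28.0N" or "94.8W"), and `parse_coordinate` is a hypothetical helper.

```python
def parse_coordinate(text):
    """Convert a string such as '28.0N' or '94.8W' to a signed float.

    South and West are returned as negative values.
    """
    text = text.strip()
    value, hemisphere = float(text[:-1]), text[-1].upper()
    if hemisphere not in ("N", "S", "E", "W"):
        raise ValueError(f"unexpected hemisphere in {text!r}")
    return -value if hemisphere in ("S", "W") else value


print(parse_coordinate("28.0N"))
print(parse_coordinate(" 94.8W"))
```

With pandas, the helper could be applied per column, for example `df["Latitude"].map(parse_coordinate)`.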

train = df.select_dtypes(exclude= 'object')

test = df_test.select_dtypes(exclude= 'object')

le = preprocessing.LabelEncoder()

target = le.fit_transform(df.Status)

# Reuse the encoder fitted on the training labels so class codes match

target_test = le.transform(df_test.Status)

len(X),len(Y),len(target)

Output:

(15681, 10455, 15681)

X = train

Y = target
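A note on label encoding: the integer codes line up between the training and test sets only when one fitted `LabelEncoder` is reused for both. The toy labels below (illustrative, not from the dataset) show how fitting a second time on the test labels can silently change the mapping when a class is absent from the test set.

```python
from sklearn.preprocessing import LabelEncoder

# Illustrative labels; "HU" does not occur in the test set.
train_labels = ["HU", "TD", "TS", "TD"]
test_labels = ["TS", "TD"]

le = LabelEncoder()
y_train = le.fit_transform(train_labels)  # classes sorted: HU=0, TD=1, TS=2
y_test_ok = le.transform(test_labels)     # same mapping as training

# Fitting again on the test labels builds a different mapping: TD=0, TS=1.
y_test_refit = LabelEncoder().fit_transform(test_labels)

print(list(le.classes_))
print(list(y_train), list(y_test_ok), list(y_test_refit))
```

`le.transform` raises a `ValueError` if the test set contains a label that was never seen during fitting, which makes such mismatches visible early.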

